However you feel about artificial intelligence (AI) — and, in particular, about the large language models and chatbots that are powered by it — the reality is that humanity is currently building and expanding infrastructure to support it. This includes large networks of power-demanding and water-requiring data centers that are being constructed, often conflicting with the electricity and water needs of the humans who live in those locations. It’s because of these concerns that some have floated the idea of AI data centers in space, with one company, SpaceX, recently announcing plans to build a literal megaconstellation of one million satellites to further that ambition.

Is this an example of an emerging technology that could provide an off-world solution to the problem of competing demands for limited resources? Or is it, like the hyperloop, an example of grift: where the concept itself isn’t exactly physically impossible, but is rendered so impractical by the actual physical constraints of the endeavor that it absolutely cannot materialize as advertised? It turns out that there are several challenges to building a functional network of AI data centers in space. Those challenges come on several fronts: economic ones, engineering ones, and ones imposed by the laws of physics themselves.

Of the five big obstacles, three might yet be solved by technological developments. The last two, however, are set by the physics of the Universe itself, and are likely to be dealbreakers for the entire endeavor.

Credit: SpaceX/rawpixel

5.) The prohibitive launch costs of satellites.

Two of the most important technological advances in recent years have come in the field of rocketry:

- the ability to safely land and reuse rockets for launching satellites into orbit,

- and the associated lowered launch costs of catapulting mass into low-Earth orbit.

One of the original launch vehicles for satellites used by the United States was the Vanguard rocket, which worked out to a cost of about $1,000,000 per kilogram. Given that a typical satellite payload is around 800 kg (1,760 pounds), those prices were close to a billion dollars (in today’s dollars) per launch in the early days of spaceflight. Over the subsequent decades, however, those launch costs dropped precipitously.

During the Space Shuttle era, that cost dropped to around $50,000 per kg. In the 2010s, as private companies like Arianespace and SpaceX came to the forefront, launch costs dropped to below $10,000 per kg for the first time. At the present time, with reusable rockets that require little maintenance (other than refueling) between launches, launch costs are finally coming down to approach the $1,000 per kg milestone. With vehicles from nation-states like Russia and China, as well as private companies like Rocket Lab, SpaceX, Arianespace and others, launch costs are no longer prohibitive. In fact, they’re likely to continue to drop over time, with the largest launch vehicles, capable of carrying the heaviest payloads, leading to the lowest overall costs at present.
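To make the trend concrete, here is a minimal sketch that applies the approximate per-kilogram figures quoted above to the 800 kg payload used in the text. The era labels and the `launch_cost` helper are illustrative names, and the rates are the article's round numbers rather than audited prices.

```python
# Approximate cost per kilogram to low-Earth orbit, as quoted in the text (USD).
COST_PER_KG = {
    "Vanguard": 1_000_000,
    "Space Shuttle": 50_000,
    "2010s commercial": 10_000,
    "Reusable rockets": 1_000,
}

PAYLOAD_KG = 800  # typical satellite payload mass used in the text


def launch_cost(cost_per_kg: float, payload_kg: float = PAYLOAD_KG) -> float:
    """Return the total launch cost in dollars for a single payload."""
    return cost_per_kg * payload_kg


if __name__ == "__main__":
    for era, rate in COST_PER_KG.items():
        print(f"{era:>18}: ${launch_cost(rate):>13,.0f}")
```

Under these numbers, the same 800 kg payload goes from roughly $800 million to under $1 million to launch.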

This one, although often cited as a drawback to data centers in space, is likely the easiest obstacle to overcome, simply through continued improvements due to economies of scale.

Credit: NASA

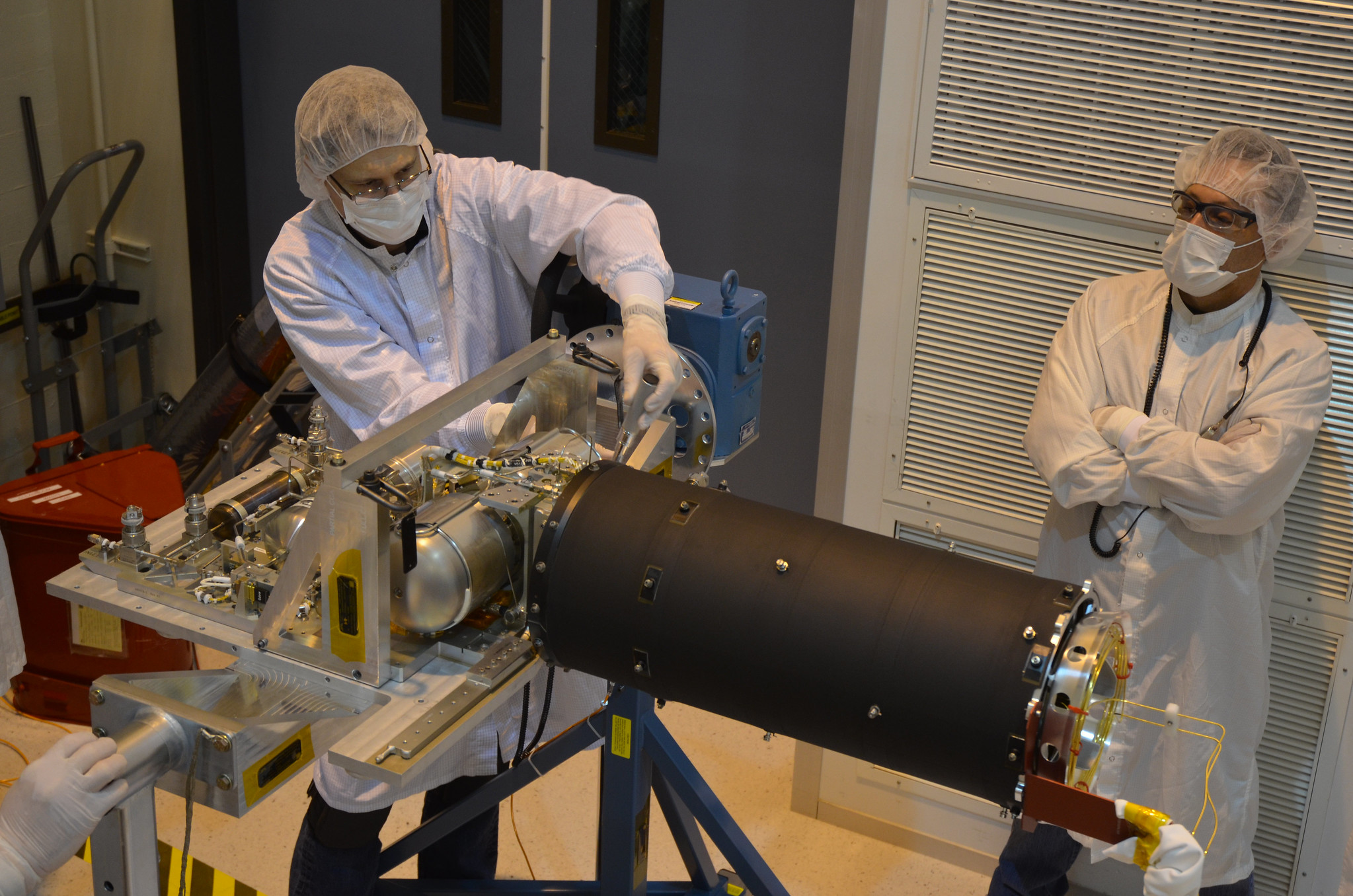

4.) The inability to repair or upgrade satellites in space.

This objection, raised by OpenAI CEO Sam Altman, is also largely an economic one. AI data centers are required to be optimized for computationally intensive tasks, including:

- the training and running of AI and machine learning models,

- the parallel processing demands of AI/LLM workloads,

- utilizing high bandwidth memory as well as GPUs and TPUs,

- and requiring high speed interconnects.

These specialized AI data centers not only require these specialized computer chips and architectures, but are also extremely power intensive. Whereas most computers use CPUs (central processing units) to perform the majority of their computations, the specialized GPUs (graphics processing units) and TPUs (tensor processing units) used in AI data centers use several times as much energy per computer as a standard CPU would.

In fact, on a per-server-rack basis, as of December 2025, the average AI data center used 60 or more kilowatts of power, as opposed to 5-10 kilowatts for a standard data center. Although power is an issue (we’ll get to that further down the list), a key concern is maintenance, as components wear out over time and must be replaced.

Here on Earth, there’s a whole pipeline for identifying which components need to be replaced or repaired and when, with continuous monitoring transforming maintenance from a reactive endeavor to a predictive one. Load levels, temperatures, events, alarm histories, battery status and more are all monitored in real time, and once any measured parameter strays outside of normal levels, the urgency of an onsite intervention can be quantified and acted upon. At least, that’s how we do it now: on the ground.

In space, we can have those same sensors, but problems can only be fixed if they fall into the first of two categories. If there’s a problem that can be solved remotely, such as with a system reboot, a new set of software commands, or the automated rerouting of certain hardware systems, then we can take those actions for an AI data center in space just as we could here on Earth. However, if there’s a problem that requires someone (whether human or robot) to travel to the site to conduct repairs or component replacements, that cannot be fixed in space.

Therefore, there will be many problems in space that, once they appear, will require a new (replacement) satellite to fix the problem with the original. That, again, is just an economics argument against AI data centers in space; with large enough numbers of satellites and a sufficient cadence of launches, this obstacle, on its own, may not be cost-prohibitive.

Credit: Courtney Celley/USFWS

3.) Providing power to these satellites.

This one is a bit more problematic, as the process of power generation is hard. Here on Earth, we typically derive power from combustion reactions, where the chemical energy stored in molecules is released as bonds are broken and new bonds are formed in the reaction. We can also use nuclear reactions, particularly through nuclear fission, to generate energy on Earth. Additional options include using wind, solar, or hydroelectric power to produce energy. But not all of these methods work once you leave the environment of Earth, with its natural resources like water, air, and the solid ground. We really have only two ways to power devices in space at present:

- you can use solar panels, and collect energy from the Sun from within the vacuum of space,

- or you can use a radioisotope thermoelectric generator, where radioactive material provides energy as your source material decays.

Because radioactive isotopes are difficult to make, they’re typically only used for deep space missions: to the gas giant worlds and beyond. Therefore, we have no real alternative to solar power for large constellations of satellites. Because there’s a maximum efficiency that solar panels can reach (currently topping out at about 20%), the way to get larger and larger amounts of power is simply to build larger and larger solar arrays, within the limits of what a mission can feasibly launch and deploy.

For the power equivalent of a single AI data center server rack, or 60 kilowatts, that would require about a 16 meter (53 foot) by 16 meter (53 foot) square of solar panels, given the Earth-Sun distance and the efficiency of those solar panels. For comparison, as you can see above, the International Space Station currently holds the record for largest solar array in space, with its numerous solar panels putting out about 120 kilowatts of power in direct sunlight. That’s only about double the power needed to run a single AI data center server rack.
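The array size quoted above can be sanity-checked with a short calculation. This is only a sketch under stated assumptions: sunlight at Earth's distance delivers about 1361 watts per square meter (a standard value for the solar constant, not a number from the text), the panels face the Sun directly, and there are no wiring, pointing, or degradation losses. The function names are illustrative.

```python
import math

# Assumed solar constant: sunlight intensity at Earth's distance from the Sun.
SOLAR_CONSTANT_W_M2 = 1361.0


def array_side_m(power_w: float, efficiency: float) -> float:
    """Side length of a square, Sun-facing array that delivers power_w."""
    area_m2 = power_w / (SOLAR_CONSTANT_W_M2 * efficiency)
    return math.sqrt(area_m2)


def array_power_w(side_m: float, efficiency: float) -> float:
    """Electrical power from a square, Sun-facing array of the given side."""
    return SOLAR_CONSTANT_W_M2 * efficiency * side_m ** 2


if __name__ == "__main__":
    side = array_side_m(60_000, 0.20)
    print(f"Side needed for 60 kW at 20% efficiency: {side:.1f} m")
    print(f"Output of a 16 m square at 20%: {array_power_w(16, 0.20) / 1000:.1f} kW")
```

At 20% efficiency the idealized side length comes out a little under 15 meters, so a 16 meter square leaves some margin for the losses this sketch ignores.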

For a large solar array, the panels must be foldable or able to be rolled and unfurled, otherwise they wouldn’t be able to fit in the launch vehicle. An array of one million satellites, as proposed, would take the power capacity of this megaconstellation up to 60 GW if each satellite carried one such rack’s worth of computing, which represents about 3% of total global solar power capacity. Gathering this enormous amount of energy is a tremendous task, and that’s why there are currently a whole slew of new off-grid power plants being built: to provide that needed energy to the AI data centers that are being constructed here on Earth. Because of the rare elements needed to build these panels, and the specialized restrictions that apply to solar panels in space, an entirely new set of industries would need to be developed and scaled up to make this work: from mining to manufacturing to production to assembly and more. Its feasibility, at present, is highly unclear.
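Scaling the single-rack numbers up to the proposed constellation is simple arithmetic, sketched below. It assumes one 60 kilowatt rack and one 16 meter by 16 meter array per satellite, which is an illustrative reading of the figures above rather than a published design. The last line only back-computes the global solar total implied by the quoted 3% share; it is not an independent measurement.

```python
RACK_POWER_KW = 60            # power per satellite, one rack's worth (assumed)
ARRAY_AREA_M2 = 16 * 16       # solar array area per satellite, from the text
N_SATELLITES = 1_000_000      # proposed constellation size
QUOTED_SOLAR_SHARE = 0.03     # the "about 3%" figure quoted in the text

total_power_gw = RACK_POWER_KW * N_SATELLITES / 1e6   # kW -> GW
total_area_km2 = ARRAY_AREA_M2 * N_SATELLITES / 1e6   # m^2 -> km^2
implied_global_solar_gw = total_power_gw / QUOTED_SOLAR_SHARE

print(f"Constellation power:     {total_power_gw:.0f} GW")
print(f"Total solar array area:  {total_area_km2:.0f} km^2")
print(f"Implied global solar:    {implied_global_solar_gw:.0f} GW")
```

Under these assumptions the constellation would need on the order of 256 square kilometers of deployed solar panels.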

2.) Cosmic ray errors.

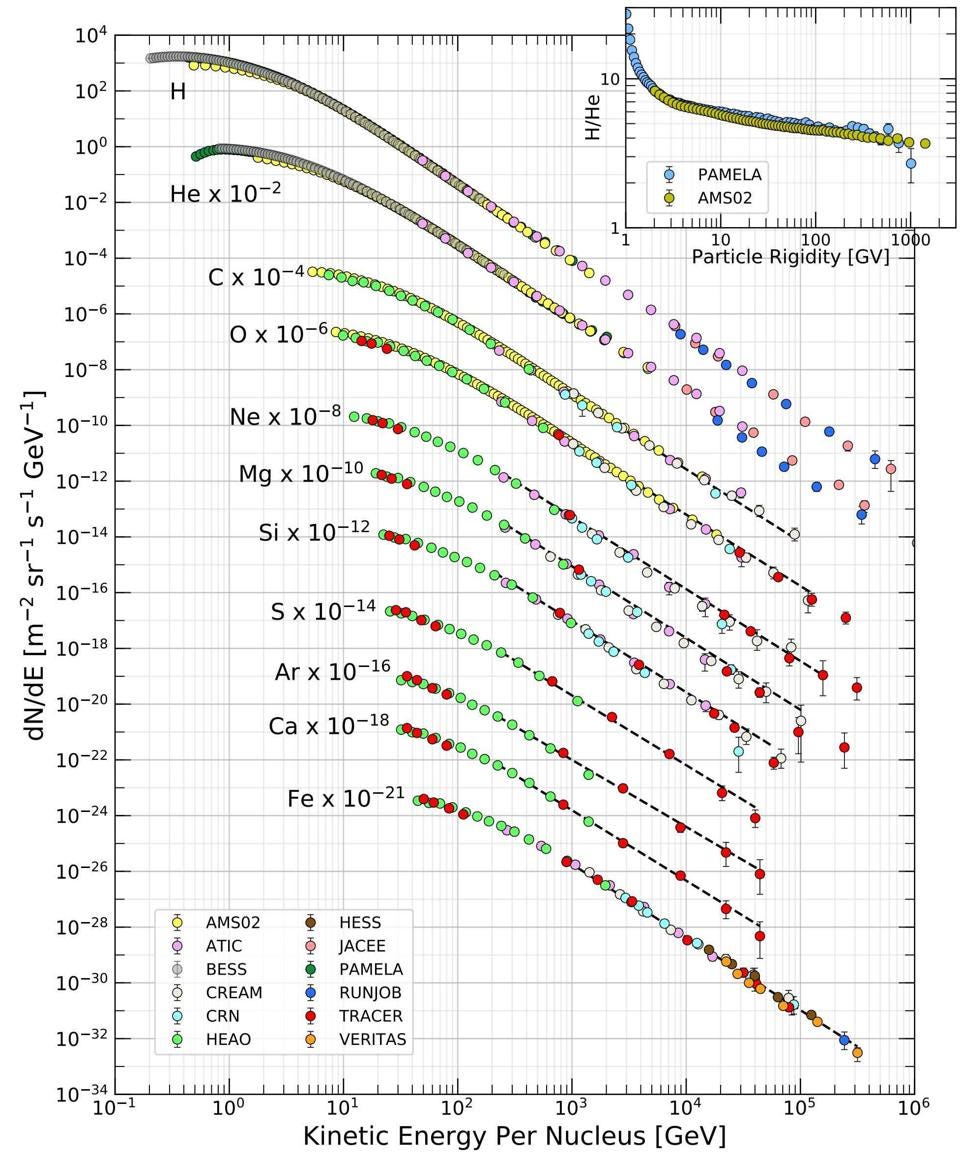

Now, we begin to move from “technological problems” to “these are problems that arise from the laws of physics themselves.” From the Sun, from stars, from white dwarfs, neutron stars, black holes, accretion disks, and all other forms of hot, accelerated matter, a set of fast-moving particles emerges: cosmic rays. These cosmic rays are all charged particles, being mostly made up of protons, with helium nuclei, electrons, positrons, a rare antiproton, and heavier atomic nuclei making up the rest. They typically move close to the speed of light, but here on Earth, they rarely affect us in our day-to-day activities.

There are two reasons for that:

- our planet’s magnetic field has a protective effect, mostly funneling these particles away from Earth except in a couple of rings toward the poles, which are the same locations where aurorae frequently appear,

- and our atmosphere has a large amount of “stopping power” when it comes to these cosmic rays, causing them to produce large particle showers that dissipate the energy, ensuring that the secondary cosmic rays that do hit Earth’s surface are low in energy.

When cosmic rays strike a data-containing electronic storage device, if they get absorbed, what they most often do is cause a single bit to “flip” inside those electronics, turning a 0 to a 1 or a 1 to a 0 in the process.

Credit: Osaka Metropolitan University/Kyoto University/Ryuunosuke Takeshige

This can be disastrous for a computational application that requires that all mathematical operations be performed correctly. “Flipping a bit” might not sound like a big error, but it can be the difference between 2+3=5 and 2+3=37, or it can be the difference between your bank account balance being positive or negative. In the context of a large language model, it can be the difference between a correct translation and a mistranslation, between a correct medical diagnosis and an incorrect one, or between identifying a snake as venomous and identifying it as non-venomous. The consequences of such an error, in the context of a computation where there is no cross-checking in place, can range from unnoticeable to catastrophic.
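Both numerical examples above correspond to a single flipped bit, as this small sketch shows. The helper names are illustrative, and the bank balance is assumed, purely for the sake of the example, to be stored as a 32-bit two's-complement integer.

```python
def flip_bit(value: int, bit: int) -> int:
    """Return value with one bit inverted (bit 0 is the least significant)."""
    return value ^ (1 << bit)


def as_signed32(raw: int) -> int:
    """Interpret a 32-bit pattern as a two's-complement signed integer."""
    return raw - (1 << 32) if raw & (1 << 31) else raw


total = 2 + 3                    # 5  is 0b000101
corrupted = flip_bit(total, 5)   # 37 is 0b100101: only bit 5 differs
print(total, "->", corrupted)

balance = 1000                                        # stored as a signed 32-bit value
corrupted_balance = as_signed32(flip_bit(balance, 31))  # flip the sign bit
print(balance, "->", corrupted_balance)
```

Flipping a low-order bit would change the result only slightly; the damage depends entirely on which bit happens to be hit.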

In space, there is no atmosphere to protect your satellites, and the Earth’s magnetic field offers little protection as well. Unless you plan on having doubly or triply redundant AI data centers (doubling or tripling your costs) in space, you won’t have a way to guard against these types of errors; once a bit is flipped, it remains so, and there’s no way to detect it without having backup, redundant systems to check it against. While these types of errors are exceedingly rare here on Earth, they happen all the time in space, and no amount of physical shielding or other protective measures can stop them. When they occur in space, and they inevitably will, there needs to be a better defense against the computer returning a wholly incorrect answer because of a bit-flip error.
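As a rough illustration of what triple redundancy buys, the sketch below takes three copies of the same result and keeps, bit by bit, whatever value at least two of them agree on. This is a generic majority-vote scheme with an illustrative function name, not a description of any particular satellite design, and it only works as long as no two copies are corrupted in the same bit.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority of three copies: each output bit matches at least two inputs."""
    return (a & b) | (a & c) | (b & c)


correct = 2 + 3          # 5, computed independently three times
copy_a = correct
copy_b = correct ^ 32    # one copy suffers a flip in bit 5 and reads 37
copy_c = correct

print(majority_vote(copy_a, copy_b, copy_c))  # the two intact copies outvote the bad one
```

With only two copies, a mismatch can be detected but not resolved, since there is no way to tell which copy is the corrupted one; that is why the cost multiplier climbs toward three.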

Cosmic rays are real, and the larger and more complex these spaceborne AI data centers become, the more susceptible to these errors they will be.

Credit: Michael Kappel/flickr

1.) The problem of cooling.

This is the big one: the big problem with trying to operate a system in space that requires a large amount of power consumption. How will you keep it from overheating, melting down, suffering from performance degradation and heat-induced damage, and ultimately, from shorting out?

Here on Earth, we have two big things that help us out: the ambient atmosphere, where air conducts heat away from hot sources, sometimes aided by fans and increased airflow, and the copious presence of liquid water on our surface, where water cooling is much more efficient than air cooling.

In fact, if you were to stand outside on a cold day, exposed to the air, you’d lose body heat more quickly the colder it was. If you moved from the air into a bath of water at the same temperature, you’d lose your heat dozens of times more quickly, which is why hypothermia is such a risk for those who enter bodies of water in near-freezing conditions. (It’s also why flamingos benefit from standing on one leg rather than two, as it better retains their body heat when they’re in the water.) It’s those interactions with molecules that efficiently transport heat away from a hot source. The greater the rate of those interactions, the quicker heat is transported away.

Credit: NASA/JPL-Caltech

But in space, there’s none of that. You can only cool your spacecraft, overall, through the process of radiation. Even if you have a coolant system on board your spacecraft, that can only transport heat from one location to another. Keeping one part of the system cold means another part gets even hotter, and that part can only shed heat in one way: by radiating it away. Heat radiation is slow, inefficient, and quite frankly insufficient for cooling such a power-intensive system made of sensitive electronics.

The costs of insufficient cooling are easy to list:

- thermal errors,

- short circuits,

- broken connections between components,

- and the eventual melting of the most heat-sensitive parts, such as lead solder.

When electronics get too hot, they fail. When you use a lot of power, you produce a lot of heat energy as an inevitable by-product. If you do this in space, you cannot use air cooling or water cooling; you can only cool through radiation. And there just isn’t a way to passively cool a 60 kilowatt AI data center rack quickly enough to avoid the problems and associated costs of insufficient cooling.
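For a sense of scale, the Stefan-Boltzmann law gives the power an ideal surface radiates as emissivity times the Stefan-Boltzmann constant times area times temperature to the fourth power. The sketch below inverts that to estimate the radiator area needed to shed 60 kilowatts. The radiator temperatures, the emissivity of 0.9, and the single radiating side are all assumptions chosen for illustration. The estimate is a lower bound, since it ignores sunlight and Earth's infrared glow absorbed by the radiator, as well as the hardware needed to carry heat from the chips to the radiating surface.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W m^-2 K^-4


def radiator_area_m2(power_w: float, temp_k: float,
                     emissivity: float = 0.9, sides: int = 1) -> float:
    """Ideal radiator area needed to radiate power_w at surface temperature temp_k."""
    flux_w_m2 = emissivity * STEFAN_BOLTZMANN * temp_k ** 4  # radiated power per m^2
    return power_w / (sides * flux_w_m2)


if __name__ == "__main__":
    for temp_k in (300, 350):
        area = radiator_area_m2(60_000, temp_k)
        print(f"Radiator at {temp_k} K: about {area:.0f} m^2 for one 60 kW rack")
```

Because the radiated power scales as the fourth power of temperature, a cooler radiator needs far more area, and that area comes on top of the solar array each satellite already has to carry.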

There’s a common refrain that you should never bet against an innovator, particularly when they already have a track record of achieving things that were previously said to be impossible. But the only thing that’s truly impossible is to defy the physical laws that govern reality at a fundamental level. As Scotty from Star Trek: The Original Series famously pleaded to Captain Kirk, “Ye cannae change the laws of physics.” Until there’s a robust method for addressing the problem of overheating that is bound to arise with an AI data center, we can predict exactly when and how all such endeavors will fail.

This article The 5 biggest obstacles to AI data centers in space is featured on Big Think.